Class 5.1: Error propagation

Methodology of Scientific Research

Andrés Aravena, PhD

March 17, 2022

The aim of science is not to open the door to infinite wisdom, but to set a limit to infinite error

Bertolt Brecht, Life of Galileo (1939)

Measurements have uncertainty

Uncertainty of measurement is the doubt about the result of a measurement, due to

- resolution

- random errors

- systematic errors

Uncertainty must be declared

How big is the margin? How bad is the doubt?

We declare an interval: [xmin, xmax]

Most of the time we write x ± 𝚫x

Example: 20cm ± 1cm

Error versus uncertainty

Do not confuse error and uncertainty

Error is the difference between the measured and the “true” value

Uncertainty is a quantification of the doubt about the result

Whenever possible we try to correct for any known errors

But any error whose value we do not know is a source of uncertainty

Combined uncertainty

Uncertainty of a single read

For a single read, the uncertainty depends at least on the instrument resolution

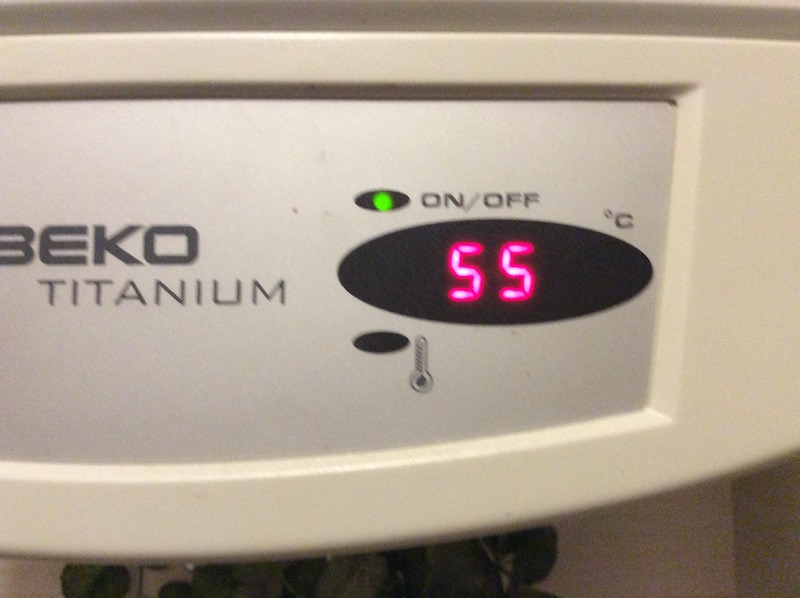

For example, my old water heater showed temperature with 5°C resolution: 50, 55, 60,…

Real vs measured value

If it shows 55°C, the real temperature is somewhere between 52.5°C and 57.5°C

We write 55°C ± 2.5°C

For a single read, 𝚫x = half of the resolution
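The rule above can be sketched in a few lines of Python. This is only an illustration: the function names `single_read_uncertainty` and `reading_interval` are made up for this example, and the numbers are those of the water heater.

```python
def single_read_uncertainty(resolution):
    """Uncertainty of one reading: half of the instrument resolution."""
    return resolution / 2


def reading_interval(reading, resolution):
    """Interval [xmin, xmax] that contains the real value."""
    delta = single_read_uncertainty(resolution)
    return reading - delta, reading + delta


# Water heater example: the display shows 55°C with 5°C resolution
delta = single_read_uncertainty(5)
low, high = reading_interval(55, 5)
print(f"55°C ± {delta}°C, that is, between {low}°C and {high}°C")
# prints: 55°C ± 2.5°C, that is, between 52.5°C and 57.5°C
```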

Combining Uncertainty

Sum of two measurements:

\[(x ± 𝚫x) + (y ± 𝚫y) = (x+y) ± (𝚫x+𝚫y)\]

Difference between measurements:

\[(x ± 𝚫x) - (y ± 𝚫y) = (x-y) ± (𝚫x+𝚫y)\]
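One way to see why the uncertainties add in both cases is to work with the intervals [xmin, xmax] directly. The sketch below assumes two made-up measurements, 20 ± 1 and 30 ± 2, and the helper names are hypothetical.

```python
def to_interval(x, dx):
    """Convert x ± dx into the interval (xmin, xmax)."""
    return x - dx, x + dx


def to_measurement(low, high):
    """Convert an interval (xmin, xmax) back into (x, dx)."""
    return (low + high) / 2, (high - low) / 2


def add_intervals(a, b):
    """Smallest and largest possible value of a + b."""
    return a[0] + b[0], a[1] + b[1]


def subtract_intervals(a, b):
    """Smallest and largest possible value of a - b.

    The smallest difference uses the largest b, and vice versa,
    so the width of the result is again the sum of both widths.
    """
    return a[0] - b[1], a[1] - b[0]


x = to_interval(20, 1)   # (19, 21)
y = to_interval(30, 2)   # (28, 32)

print(to_measurement(*add_intervals(x, y)))       # (50.0, 3.0)
print(to_measurement(*subtract_intervals(x, y)))  # (-10.0, 3.0)
```

In both cases the resulting uncertainty is 1 + 2 = 3, as the formulas say.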

Multiplying Uncertainty

To calculate \((x ± 𝚫x) × (y ± 𝚫y)\) we first write the uncertainty as percentage

\[(x ± 𝚫x/x\%) × (y ± 𝚫y/y\%)\]

Then we sum the percentages:

\[xy ± (𝚫x/x + 𝚫y/y)\%\]

Finally we convert back to the original units:

\[xy ± xy(𝚫x/x + 𝚫y/y)\]

Proof

\[ \begin{aligned} (x ± 𝚫x) \times (y ± 𝚫y) & = x(1 ± 𝚫x/x) \times y(1 ± 𝚫y/y)\\ & = xy(1 ± 𝚫x/x)(1 ± 𝚫y/y) \\ & = xy(1 ± 𝚫x/x ± 𝚫y/y ± (𝚫x/x)(𝚫y/y)) \\ & \approx xy(1 ± (𝚫x/x + 𝚫y/y)) \\ & = xy ± xy(𝚫x/x + 𝚫y/y) \end{aligned} \]

We discard \((𝚫x/x)(𝚫y/y)\) because it is small
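To get a feeling for how small the discarded term is, we can compare the rule with the exact extreme values of the product. This is a sketch with made-up numbers (20 ± 1 and 10 ± 0.5) that assumes both values are positive. The function names are hypothetical.

```python
def product_rule(x, dx, y, dy):
    """Product and its uncertainty using the sum of relative uncertainties."""
    product = x * y
    return product, product * (dx / x + dy / y)


def product_exact_interval(x, dx, y, dy):
    """Exact smallest and largest product, assuming positive values."""
    return (x - dx) * (y - dy), (x + dx) * (y + dy)


x, dx = 20.0, 1.0   # 5% relative uncertainty
y, dy = 10.0, 0.5   # 5% relative uncertainty

product, delta = product_rule(x, dx, y, dy)
low, high = product_exact_interval(x, dx, y, dy)

print(f"rule:  {product - delta:.1f} to {product + delta:.1f}")
print(f"exact: {low:.1f} to {high:.1f}")
# The two intervals differ by dx * dy, which is the discarded term
print(f"discarded term: {dx * dy:.1f}")
```

Here the rule gives 200 ± 20, and the exact limits are shifted by only 0.5.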

The easy rule for error propagation

\[ \begin{align} (x ± 𝚫x) + (y ± 𝚫y) & = (x+y) ± (𝚫x+𝚫y)\\ (x ± 𝚫x) - (y ± 𝚫y) & = (x-y) ± (𝚫x+𝚫y)\\ (x ± 𝚫x\%) \times (y ± 𝚫y\%)& =xy ± (𝚫x\% + 𝚫y\%)\\ (x ± 𝚫x\%) ÷ (y ± 𝚫y\%)& =x/y ± (𝚫x\% + 𝚫y\%)\end{align} \]

Here \(𝚫x\%\) represents the relative uncertainty, that is \(𝚫x/x\)

We use absolute uncertainty for + and -, and relative uncertainty for ⨉ and ÷
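The four rules fit in a small Python class. This is a minimal sketch, not a replacement for a real uncertainty library: the class name `Measurement` and the mass and volume values are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    value: float
    delta: float  # absolute uncertainty

    @property
    def relative(self):
        """Relative uncertainty, that is delta / |value|."""
        return self.delta / abs(self.value)

    # + and - : add the absolute uncertainties
    def __add__(self, other):
        return Measurement(self.value + other.value, self.delta + other.delta)

    def __sub__(self, other):
        return Measurement(self.value - other.value, self.delta + other.delta)

    # * and / : add the relative uncertainties, then convert back
    def __mul__(self, other):
        value = self.value * other.value
        return Measurement(value, abs(value) * (self.relative + other.relative))

    def __truediv__(self, other):
        value = self.value / other.value
        return Measurement(value, abs(value) * (self.relative + other.relative))


# Hypothetical values: mass with 1% and volume with 2% relative uncertainty
mass = Measurement(100.0, 1.0)
volume = Measurement(50.0, 1.0)
density = mass / volume
print(f"{density.value:.2f} ± {density.delta:.2f}")  # 3% of 2.00
```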

General formula

Assuming that the errors are small compared to the main value, we can find the error for any “reasonable” function

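Taylor’s Theorem says that, for any twice-differentiable function \(f,\) we have \[f(x±𝚫x) = f(x) ± \frac{df}{dx}(x)\cdot 𝚫x + \frac{d^2f}{dx^2}(x+\varepsilon)\cdot \frac{𝚫x^2}{2}\] for some \(\varepsilon\) between \(-𝚫x\) and \(𝚫x.\) When \(𝚫x\) is small, we can ignore the last term, so the uncertainty of \(f(x)\) is \(\left|\frac{df}{dx}(x)\right|\cdot 𝚫x.\)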
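When the derivative is tedious to find by hand, the first-order term can be estimated numerically. The sketch below uses a central difference with a hypothetical step size `h`. The function name `propagate` is also invented for this example, and it assumes that \(f\) is smooth near \(x\).

```python
def propagate(f, x, dx, h=1e-6):
    """Return f(x) and its first-order uncertainty |f'(x)| * dx.

    The derivative is approximated by a central difference with step h.
    """
    derivative = (f(x + h) - f(x - h)) / (2 * h)
    return f(x), abs(derivative) * dx


# Example: f(x) = x^2 at x = 3 ± 0.1, where f'(x) = 2x = 6
value, delta = propagate(lambda x: x ** 2, 3.0, 0.1)
print(f"{value:.2f} ± {delta:.2f}")
```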

Example 1

\[\begin{align} (x ±𝚫x)^2& = x^2 ± 2x\cdot𝚫x\\ & = x^2 ± 2x^2\cdot\frac{𝚫x}{x} \\ & = x^2 ± 2𝚫x\% \end{align}\]

Example 2

\[\begin{align} \sqrt{x ±𝚫x}& = \sqrt x ± \frac{1}{2\sqrt x}\cdot 𝚫x\\ & = \sqrt x ± \frac{1}{2}\sqrt x\cdot \frac{𝚫x}{x}\\ & = \sqrt x ± \frac{1}{2}𝚫x\% \end{align}\]
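Both examples can be checked with numbers. In this sketch the value 100 ± 2 is made up, and the function names are hypothetical. Squaring doubles the relative uncertainty, and the square root halves it.

```python
import math


def square_with_uncertainty(x, dx):
    """x^2 and its uncertainty 2 * x * dx (Example 1)."""
    return x ** 2, 2 * x * dx


def sqrt_with_uncertainty(x, dx):
    """sqrt(x) and its uncertainty dx / (2 * sqrt(x)) (Example 2)."""
    return math.sqrt(x), dx / (2 * math.sqrt(x))


x, dx = 100.0, 2.0  # relative uncertainty 2%

sq, sq_delta = square_with_uncertainty(x, dx)
rt, rt_delta = sqrt_with_uncertainty(x, dx)

print(f"x:       relative uncertainty {dx / x:.1%}")
print(f"x^2:     relative uncertainty {sq_delta / sq:.1%}")
print(f"sqrt(x): relative uncertainty {rt_delta / rt:.1%}")
```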

Exercise

Calculate the uncertainty in

- the density of the stone ball

- the Drake equation

- the number of piano tuners in your city

Not all uncertainties are alike

Last class we only considered one kind of uncertainty: the instrument resolution

This is a “one time” error

- We notice it immediately

- It does not change if we measure again

My old water heater

My new water heater

Noise

There are other sources of uncertainty: noise

When the instrument resolution is good, we observe that the measured values change on every read

In many cases this is due to thermal effects, or other sources of noise

Usually the variability follows a Normal distribution
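Noisy readings are easy to imitate on a computer. The following sketch assumes a true temperature of 55°C and Normal noise with a standard deviation of 0.5°C. Both numbers, and the function name `simulate_reads`, are invented for illustration.

```python
import random
import statistics


def simulate_reads(true_value, noise_sd, n_reads, seed=0):
    """Simulate repeated reads of the same value with Normal noise."""
    rng = random.Random(seed)  # fixed seed, so the result is reproducible
    return [rng.gauss(true_value, noise_sd) for _ in range(n_reads)]


reads = simulate_reads(true_value=55.0, noise_sd=0.5, n_reads=10_000)

# Every read is different, but they cluster around the true value
print("first reads:", [round(r, 2) for r in reads[:5]])
print(f"mean = {statistics.mean(reads):.2f}")
print(f"standard deviation = {statistics.stdev(reads):.2f}")
```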

Example

Distribution of thermal noise

Real vs measured data

Discretization uncertainty

Combined

How to combine

The exact distribution is hard to calculate

International standards suggest using computer simulation

They recommend Monte Carlo methods

(what we did here)
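A minimal sketch of such a simulation is shown below. It is not the code used for the slides. It assumes true values spread uniformly between 20 and 30, Normal noise with standard deviation 0.3, and an instrument resolution of 1. All these numbers and the function names are hypothetical.

```python
import random
import statistics


def quantize(value, resolution):
    """Round a value to the nearest step the instrument can show."""
    return resolution * round(value / resolution)


def simulate_errors(noise_sd, resolution, n_samples=20_000, seed=0):
    """Simulate measured - true for many random true values.

    resolution=None means an instrument without discretization;
    noise_sd=0 means an instrument without noise.
    """
    rng = random.Random(seed)
    errors = []
    for _ in range(n_samples):
        true_value = rng.uniform(20.0, 30.0)
        measured = rng.gauss(true_value, noise_sd)      # add noise
        if resolution is not None:
            measured = quantize(measured, resolution)   # then discretize
        errors.append(measured - true_value)
    return errors


noise_only = simulate_errors(noise_sd=0.3, resolution=None)
discrete_only = simulate_errors(noise_sd=0.0, resolution=1.0)
combined = simulate_errors(noise_sd=0.3, resolution=1.0)

for name, errors in [("noise", noise_only),
                     ("discretization", discrete_only),
                     ("combined", combined)]:
    print(f"{name:15s} spread = {statistics.stdev(errors):.3f}")
```

The spread of the combined error can then be read from the simulated values, without calculating the exact distribution.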

It is the mark of an instructed mind to rest assured with that degree of precision that the nature of the subject admits, and not to seek exactness when only an approximation of the truth is possible

Aristotle (384–322 BC), Nicomachean Ethics